The Trusted AI Bulletin #7

Issue #7 – Scaling AI Responsibly: From Compliance to Competitive Edge

Introduction

In this edition of The Trusted AI Bulletin, we examine the shifting centre of gravity for AI within financial services firms. The newly released 2025 AI Index Report highlights not only the global acceleration in AI development but also the widening gaps in regulatory preparedness and organisational readiness.

Our Co-Founder Thushan Kumaraswamy opens with a perspective on the need for business-led AI ownership — a view increasingly echoed across the industry. As firms move from experimentation to enterprise adoption, clarity around governance, accountability, and value realisation is no longer optional. This issue explores what that shift looks like in practice.

Executive Perspective: Where Should AI Responsibility Live?

Our Co-Founder Thushan Kumaraswamy comments:

"The 2025 AI Index Report raise some interesting challenges regarding Responsible AI (RAI) in business. The use of AI in financial services firms requires cooperation between multiple departments, but ownership of AI remains fragmented. Currently, it sits with information security or data teams, but it really needs to be owned by the business.

It is the business that is paying for the AI systems to be developed and adopted. It is the business that owns the data used by the AI systems. It is the business that (hopefully!) sees the value realised.

I am starting to see more “Head of Responsible AI” noise in financial services firms now, with Lloyds Banking Group hiring in Jan 2025, but there are still not that many, and it remains unclear whether these kinds of roles are data/tech-related or part of the business.

I get that AI, both from a technical perspective and an operational one, is new for many business leaders, and they struggle to keep up with the daily barrage of innovations. This is where a “Head of AI” should sit: to advise the business on what is possible with AI, to work with data, technology, and infosec teams to ensure that AI systems are used safely, and to ensure that the ROI of AI is at least what is expected.

Specialists can advise on a temporary basis, but in the long term this must be an in-house role and team, supported by the board, and given the necessary authority to stop AI developments at any stage if they pose uncontrolled risks to the firm or will not deliver the required return."

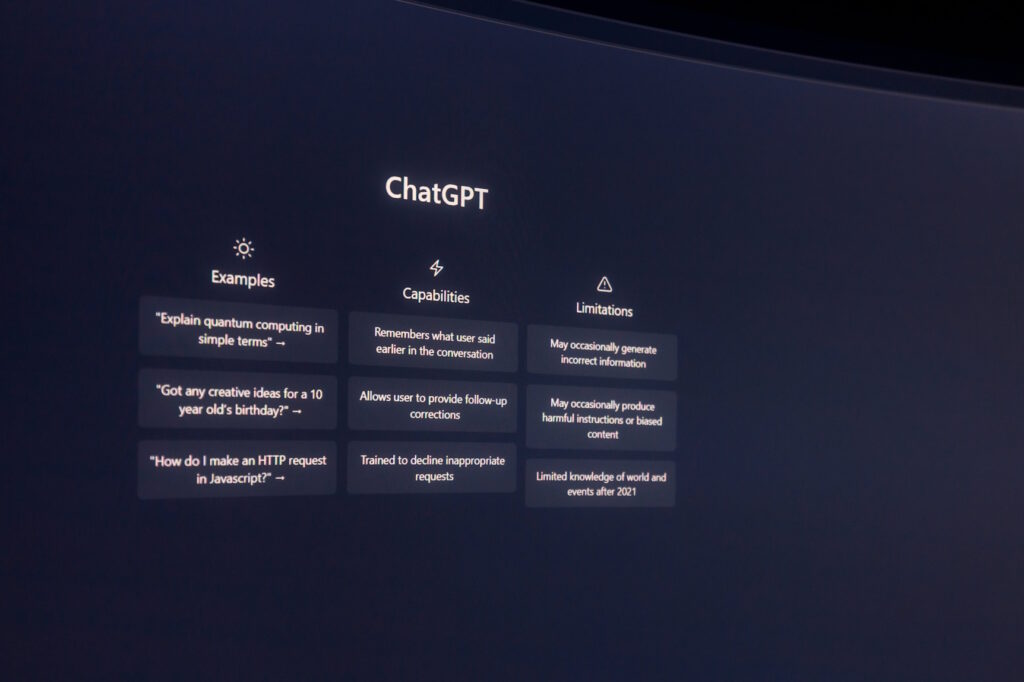

100 AI Use Cases in Financial Services - #1 Chatbot

In last week’s edition, we introduced our series on the 100 AI use cases reshaping financial services — focusing on how firms can move from experimentation to scalable, high-impact adoption.

We kick off the series with one of the most widely adopted and visible AI use cases: chatbots. In financial services, chatbots are transforming how firms interact with customers — delivering faster support, tailored advice, and improved satisfaction. But the benefits come with real risks around data privacy, regulatory compliance, and fairness.

AI Highlights of the Week

1. New AI Index Report Charts Rising Global Stakes—and Regulatory Gaps

The 2025 AI Index Report has just been released by Stanford University, offering a comprehensive snapshot of global trends in artificial intelligence. This year’s report underscores the intensifying race between nations, with the United States still leading in the development of top AI models but China rapidly catching up, especially in research output and patent filings.

The report draws attention to the soaring cost of training cutting-edge models (OpenAI’s GPT-4 is estimated to have cost $78 million), raising questions about who can afford to innovate at this scale. Notably, AI regulation is on the rise: AI-related regulations in the U.S. have grown from just one in 2016 to 25 in 2023, reflecting the increasing pressure on governments to keep pace with technological advancement.

As AI systems become more powerful and embedded in daily life, the findings stress the urgent need for thoughtful, coordinated governance that can balance innovation with accountability. With AI's trajectory showing no signs of slowing, the report serves as a timely reminder that regulatory frameworks must evolve just as swiftly.

Source: 2025 AI Index Report link

2. Brussels Bets Big on AI to Regain Tech Edge and Counter U.S. Tariffs

As the European Union grapples with the ripple effects of American tariffs, Brussels is preparing a major policy shift aimed at transforming Europe into an “AI Continent.” A draft strategy, to be unveiled this week, reveals plans to streamline regulations, reduce compliance burdens, and create a more innovation-friendly environment for AI development.

This charm offensive is a direct response to mounting criticism from Big Tech and global AI leaders, who argue that the EU’s rigid regulatory framework, including the AI Act, is stifling competitiveness. Central to the strategy are massive investments in computing infrastructure — including five AI “gigafactories” — and ambitious targets to boost AI skills among 100 million Europeans by 2030.

The push also seeks to reduce dependence on U.S.-based cloud providers by tripling Europe’s data centre capacity. With only 13 percent of European firms currently adopting AI, the plan signals a timely recalibration of Europe's approach to AI governance — one that recognises the urgent need to lead, not lag, in the global AI race.

Source: Politico link

3. Standard Chartered Embraces Generative AI to Revolutionise Global Operations

Standard Chartered is set to deploy its Generative AI tool, SC GPT, across 41 markets, making it available to its 70,000 employees to enhance operational efficiency and client engagement. This strategic move is expected to boost productivity, personalise sales and marketing efforts, automate software engineering tasks, and refine risk management processes.

A more tailored version is in development to leverage the bank's proprietary data for bespoke problem-solving, while local teams are encouraged to adapt SC GPT to address specific market needs, including digital marketing and customer services. This initiative underscores Standard Chartered's commitment to responsibly harnessing AI, reflecting a broader trend in the financial sector towards integrating advanced technologies.

As AI governance and regulations evolve, such proactive adoption highlights the importance of balancing innovation with ethical considerations in the banking industry.

Source: Finextra link

4. Navigating the AI Revolution: Ensuring Responsible Innovation in UK Financial Services

The integration of AI into financial services is revolutionising the sector, enhancing operations from algorithmic trading to personalised customer interactions. However, this rapid adoption introduces significant regulatory challenges, particularly concerning financial stability and consumer protection.

The UK's Financial Conduct Authority (FCA) has yet to implement comprehensive AI regulations, leading to ambiguity in compliance and oversight. Unregulated AI-driven activities, such as algorithmic trading, could exacerbate market volatility, while biased AI models in credit scoring may disadvantage vulnerable consumers.

To address these issues, financial institutions should proactively enhance AI governance frameworks, prioritising transparency, bias mitigation, and robust cybersecurity measures. Engaging with policymakers to establish clear, forward-thinking regulations is crucial to balance innovation with economic stability.

As AI continues to redefine financial services, the UK's ability to implement effective governance will determine its leadership in this evolving landscape.

Source: HM Strategy link

Industry Insights

Case study – Allianz Scaling Responsible AI Across Global Insurance Operations

Allianz, one of the world’s largest insurers, is taking a leading role in translating Responsible AI principles into real-world practice across its global operations. With nearly 160,000 employees and a presence in more than 70 countries, the company has moved beyond AI experimentation to embed ethical safeguards into scalable AI deployment.

In 2024, Allianz joined the European Commission’s AI Pact, aligning its roadmap with the EU AI Act and signalling its intent to not just comply, but lead on AI governance.

At the core of Allianz’s approach is a practical, organisation-wide AI Risk Management Framework developed in-house. This framework governs all AI and machine learning initiatives, from document processing to customer service automation, with defined roles for model owners, risk teams, and compliance functions.

Key initiatives include:

- An AI Impact Assessment Tool used early in development to flag risks such as discriminatory outcomes, low explainability, or overreliance on sensitive data.

- The Enterprise Knowledge Assistant (EKA), a GenAI-powered tool now used by thousands of service agents to cut resolution times and improve consistency across 10+ countries.

- A strict model registration process and “human-in-the-loop” policy to ensure that critical decisions — like claims rejection or fraud detection — are always overseen by a human.

- Mandatory training for AI Product Owners, with oversight from a central AI governance board embedded in Group Compliance.

These measures are not theoretical. They have enabled Allianz to scale nearly 400 GenAI use cases while maintaining regulatory confidence, internal accountability, and public trust. For Allianz, AI governance is more than risk mitigation — it’s what allows innovation to scale responsibly, without compromising on customer fairness or institutional integrity.
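To make the model registration and human-in-the-loop controls more concrete, here is a minimal, hypothetical Python sketch of how such a policy might be enforced at the point an AI recommendation is produced. The decision types, model identifiers and the `route` function are illustrative assumptions, not a description of Allianz's actual systems.

```python
from dataclasses import dataclass

# Hypothetical decision types treated as "critical" under a human-in-the-loop
# policy (illustrative only, not Allianz's actual taxonomy).
CRITICAL_DECISIONS = {"claims_rejection", "fraud_flag"}

# Hypothetical model registry: only registered models may be used at all.
REGISTERED_MODELS = {"claims-triage-v3", "fraud-score-v1"}


@dataclass
class AIRecommendation:
    model_id: str
    decision_type: str
    outcome: str


def route(rec: AIRecommendation) -> str:
    """Decide whether a recommendation is blocked, sent to a human, or automated."""
    if rec.model_id not in REGISTERED_MODELS:
        # Outputs from unregistered models are never acted on.
        return "blocked"
    if rec.decision_type in CRITICAL_DECISIONS:
        # Critical decisions are never finalised by the model alone.
        return "human_review"
    # Lower-risk tasks may proceed without manual sign-off.
    return "automated"


print(route(AIRecommendation("fraud-score-v1", "fraud_flag", "suspicious")))
print(route(AIRecommendation("claims-triage-v3", "document_classification", "invoice")))
print(route(AIRecommendation("shadow-model", "claims_rejection", "reject")))
```

Checking registration before criticality reflects the ordering of the controls described above: an unregistered model is stopped outright, and even a registered model cannot finalise a critical decision such as a claims rejection without a person signing off.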

Sources: Allianz link 1, Allianz link 2, WE Forum PDF link

Upcoming Events

1. Gartner Expert Q&A: Practical Guidance on Adapting to the EU AI Act – 14 April 2025

This webinar offers valuable insights for businesses navigating the new EU AI regulations. Industry experts will provide actionable advice on how to ensure compliance and unlock opportunities within the evolving AI landscape. It's a must-attend for anyone keen to stay ahead of regulatory changes and ensure their AI strategies are future-proof.

Register now: Gartner link

2. In-Person Event: AI Breakfast & Roundtable – From AI Proof of Concept to Scalable Enterprise Adoption – 23 April 2025

Leading Point is hosting an exclusive AI Breakfast & Roundtable, bringing together AI leaders from top financial institutions, including banks, insurance firms, and buy-side institutions. This intimate, high-level discussion will explore the challenges and opportunities in scaling AI beyond proof of concept to enterprise-wide adoption.

Key discussion points include overcoming implementation barriers, aligning AI initiatives with business objectives, and best practices for AI success in banking, insurance, and investment management. This event offers a unique opportunity to connect with industry peers and gain strategic insights on embedding AI as a core driver of business value.

Want to be a part of the conversation?

If you are an executive with AI responsibilities in business, risk & compliance, or data, contact Rajen Madan or Thushan Kumaraswamy to get a seat at the table.

3. In-Person Event: The AI in Business Conference – 15 May 2025

This in-person event offers a unique opportunity to hear from industry leaders across various sectors, providing real-world insights into AI implementation and strategy. Attendees will benefit from a rich agenda of expert sessions and have the chance to network with like-minded professionals, building lasting connections while tackling common challenges in AI.

Plus, the event is co-located with the Digital Transformation Conference, allowing platinum ticket holders to access a broader range of content, deepening their understanding of AI’s role in digital business transformation.

Register now: AI Business Conference link

Conclusion

The themes emerging across this issue point to a maturing AI agenda in financial services: from clearer governance models and responsible scaling, to regulatory recalibration and infrastructure investment. What’s clear is that AI can no longer be treated as a peripheral capability — it must be embedded within core business strategy, with the right controls in place from the outset.

As organisations seek to balance innovation with oversight, the ability to operationalise Responsible AI at scale will define not only compliance readiness but also competitive advantage.

In our next issue, we’ll continue the ‘100 AI Use Cases’ series with a focus on AI in Investment Research — examining how firms are using AI to enhance insight generation, improve analyst productivity, and navigate the risks of model-driven decision-making.

The Trusted AI Bulletin #6

Issue #6 – The AI Policy Pulse: Balancing Risk, Trust & Progress

Introduction

Welcome to this edition of The Trusted AI Bulletin, where we break down the latest shifts in AI policy, regulation, and the evolving landscape of AI adoption.

This week, we explore the debate over AI risk assessments in the EU, as MEPs push back against a proposal that could exempt major tech firms from stricter oversight. We also examine the UK’s latest strategy for regulating AI in financial services and how businesses are navigating the complexities of AI adoption—balancing innovation with compliance. Meanwhile, in government, outdated infrastructure threatens to stall progress, underscoring the need for practical transformation strategies.

With AI becoming ever more embedded in critical systems, the focus is shifting to how organisations can create real value with AI while ensuring responsible governance. From regulatory battles to real-world implementation challenges, these stories highlight the urgent need for a balanced approach—one that drives adoption, fuels transformation, and keeps accountability at its core.

100 AI Use Cases in Financial Services

AI adoption in financial services is no longer a question of if, but where and how. As firms move beyond experimentation, the focus is shifting toward practical, high-impact use cases that can drive real operational and strategic value. From front-office customer engagement to back-office automation, the opportunities to embed AI across the business are expanding rapidly.

But with so many possibilities, the challenge lies in identifying where AI can deliver meaningful outcomes, and in doing so in a way that’s scalable, compliant, and aligned with the firm’s broader objectives. That’s where a clear view of proven and emerging use cases becomes essential.

Over the coming weeks, we’ll be exploring the 100 AI Use Cases we have identified as shaping the future of financial services. For each, we’ll look at the models involved, the data required, key vendors operating in the space, risk considerations, and examples of where adoption is already underway. The goal is to help senior leaders cut through the noise and focus on the AI opportunities that matter, both now and next.

Key Highlights of the Week

1. MEPs Criticise EU's Shift Towards Voluntary AI Risk Assessments

A coalition of Members of the European Parliament (MEPs) has expressed significant concern over the European Commission's proposal to make certain AI risk assessment provisions voluntary, particularly those affecting general-purpose AI systems like ChatGPT and Copilot.

This move could exempt major tech companies from mandatory evaluations of their systems for issues such as discrimination or election interference. The MEPs argue that such a change undermines the AI Act's foundational goals of safeguarding fundamental rights and democracy.

This development highlights the ongoing tension between regulatory bodies and technology firms, especially from the United States, regarding the balance between innovation and ethical oversight in AI governance. The outcome of this debate will be pivotal in shaping the future landscape of AI regulation within the European Union.

Source: Dutch News link

2. UK Financial Regulator Launches Strategy to Balance Risk and Foster Economic Growth

The FCA has launched a new five-year strategy focused on boosting trust, supporting innovation, and improving outcomes for consumers across UK financial services.

By committing to becoming a more data-led, tech-savvy regulator, the FCA aims to strike a better balance between risk and growth—an approach that holds significant implications for the governance of emerging technologies like AI.

Its emphasis on smarter regulation, financial crime prevention, and inclusive consumer support signals a shift toward more agile, forward-looking oversight. For those navigating evolving AI regulations, this strategy reinforces the FCA’s intent to create a regulatory environment that fosters responsible innovation.

Source: FCA link

3. Public Accounts Committee Warns of AI Rollout Challenges Amid Legacy Infrastructure

The UK government's ambitious plans to integrate AI across public services are at risk due to outdated IT infrastructure, poor data quality, and a shortage of skilled personnel.

A report by the Public Accounts Committee (PAC) highlights that over 20 legacy IT systems remain unfunded for necessary upgrades, with nearly a third of central government systems deemed obsolete as of 2024.

Despite intentions to drive economic growth through AI adoption, these foundational weaknesses pose significant challenges. The PAC also raises concerns about persistent digital skills shortages and uncompetitive civil service pay rates, which hinder the recruitment and retention of necessary talent.

Addressing these issues is crucial to ensure that AI initiatives are effectively implemented, fostering public trust and delivering the anticipated benefits of technological advancement.

Source: The Guardian link

Featured Articles

1. Why the UK’s Light-Touch AI Approach Might Not Be Enough

AI regulation in the UK is developing at a cautious pace, with the government opting for a principles-based, sector-led approach rather than comprehensive legislation. While this flexible model aims to foster innovation and reduce regulatory burdens, it risks creating a fragmented landscape where inconsistent standards could undermine public trust and accountability.

The article highlights that regulators often lack the technical expertise and resources to effectively oversee AI, raising concerns about how well current frameworks can keep pace with rapid technological advancements.

Meanwhile, businesses are calling for greater clarity and coherence, especially those operating across borders and facing stricter regimes like the EU AI Act. The UK’s strategy, though well-intentioned, may fall short in addressing the systemic risks posed by AI if coordination and enforcement mechanisms remain weak. For those focused on AI governance, the message is clear: without sharper oversight and alignment, the UK could lag in both trust and competitiveness.

Source: ICAEW link

2. Bridging the AI Knowledge Gap: A Foundation for Responsible Innovation

In an era where artificial intelligence is reshaping everything from financial services to public policy, understanding how AI works is becoming essential—not just for technologists, but for everyone.

As AI systems increasingly influence the decisions that affect us, the products we use, and even the jobs we do, being AI-literate is no longer a nice-to-have but a societal imperative. The CFTE AI Literacy White Paper explores why foundational knowledge of AI is critical for individuals, businesses, and governments alike, arguing that AI should be treated as a core component of digital literacy.

What’s particularly compelling is the focus on inclusion—ensuring that access to AI knowledge isn't limited to a technical elite but extended across sectors and demographics. Without widespread AI literacy, regulatory and governance efforts risk being outpaced by innovation.

This makes the paper especially relevant to those shaping or responding to emerging AI regulations and frameworks. It’s both a call to action and a roadmap for building a more informed, resilient society in the age of intelligent systems.

Source: CFTE link

3. AI in 2025: From Reasoning Machines to Multimodal Intelligence

The year ahead promises significant advances in artificial intelligence, particularly in areas like reasoning, frontier models, and multimodal capabilities. Large language models are evolving to exhibit more sophisticated forms of human-like reasoning, enhancing their utility across sectors from healthcare to finance.

At the same time, so-called frontier models—exceptionally large and powerful systems—are setting new benchmarks in tasks like image generation and complex decision-making. Multimodal AI, which integrates text, image, and audio inputs, is maturing rapidly and could redefine how machines interpret and respond to the world.

These developments underscore the urgency for updated governance frameworks that can keep pace with AI’s expanding scope and impact. As capabilities grow, so too does the need for greater regulatory clarity and ethical oversight.

Source: Morgan Stanley link

Industry Insights

Case Study: Building a Trustworthy Data Foundation for Responsible AI

Capital One, a major retail bank and credit card provider, has positioned itself at the forefront of responsible AI by investing in a robust, AI-ready data ecosystem. Operating in a highly regulated industry where trust and accuracy are vital, the company recognised early on that scalable, ethical AI requires more than just advanced algorithms: it demands a disciplined approach to data governance and transparency.

In recent years, Capital One has overhauled its data infrastructure to align with its long-term AI vision, focusing on quality, accessibility, and accountability across the entire data lifecycle.

To support this transformation, Capital One implemented a suite of Responsible AI practices, including standardised metadata tagging, active data lineage tracking, and embedded governance controls across cloud-native platforms. These efforts are supported by cross-functional teams that bring together AI researchers, compliance professionals, and data engineers to operationalise fairness, explainability, and bias mitigation.

The results are tangible: Capital One has accelerated the deployment of customer-facing AI solutions—such as fraud detection and credit risk models—while ensuring they meet internal and regulatory standards. By prioritising responsible data management as the foundation for AI, the company is not only enhancing trust with regulators and customers but also driving innovation with confidence.

Key Takeaways:

1. Data governance first: Ethical AI starts with well-governed, high-quality data.

2. Cross-functional collaboration: Aligning compliance, engineering and AI teams is key to operationalising responsibility.

3. Built-in controls, not bolt-ons: Embedding governance into AI systems from the outset enhances both trust and speed to market.

Sources: Forbes link, Capital One link

Upcoming Events

1. Webinar: D&A Leaders: Preparing Your Data for AI Integration – 2 April 2025 3:00 am BST

Gartner's upcoming webinar, "D&A Leaders, Ready Your Data for AI," focuses on equipping data and analytics professionals with strategies to prepare organisational data for effective artificial intelligence integration. The session will cover best practices for data quality management, governance frameworks, and aligning data strategies with AI objectives. Attendees will gain actionable insights to ensure their data assets are primed for AI-driven initiatives, enhancing decision-making and business outcomes.

Register now: Gartner link

2. In-Person Event: AI Breakfast & Roundtable – From AI Proof of Concept to Scalable Enterprise Adoption – 23 April 2025

Leading Point is hosting an exclusive AI Breakfast & Roundtable, bringing together AI leaders from top financial institutions, including banks, insurance firms, and buy-side institutions. This intimate, high-level discussion will explore the challenges and opportunities in scaling AI beyond proof of concept to enterprise-wide adoption.

Key discussion points include overcoming implementation barriers, aligning AI initiatives with business objectives, and best practices for AI success in banking, insurance, and investment management. This event offers a unique opportunity to connect with industry peers and gain strategic insights on embedding AI as a core driver of business value.

Want to be a part of the conversation?

If you are an executive with AI responsibilities in business, risk & compliance, or data, contact Rajen Madan or Thushan Kumaraswamy to get a seat at the table.

3. In-Person Event: Smarter Clouds, Stronger Businesses: AI-Driven Innovation, Efficiency and Resilience – 24 April 2025

IDC, HPE and TCS are hosting an upcoming roundtable, Smarter Clouds, Stronger Businesses, which explores how enterprises can drive innovation and resilience by aligning AI strategies with modern cloud architectures. With a focus on agility, scalability, and performance, the agenda covers best practices for adopting AI-enabled infrastructure, building secure and future-ready cloud environments, and reducing complexity across hybrid ecosystems. Industry experts will share insights on turning cloud investments into long-term business value, enabling organisations to stay competitive in an increasingly data-driven world.

Register now: IDC link

4. In-Person Event: Risk & Compliance in Financial Services – 29 April 2025

The 9th Annual Risk & Compliance in Financial Services Conference brings together senior professionals from firms such as Aviva, Invesco, Lloyds Banking Group and NatWest. This year’s agenda focuses on emerging challenges and innovations in the sector—from the use of AI to enhance compliance and operational resilience, to navigating evolving regulations like DORA and Consumer Duty. With expert-led panels on financial crime, cyber risk, and ESG reporting, attendees can expect forward-looking insights tailored for today’s risk environment.

Register now: Financial IT link

Conclusion

The developments this week reinforce a crucial reality: effective AI governance is about more than setting rules—it’s about ensuring accountability, trust, and long-term resilience. Whether it’s the EU’s regulatory crossroads, the FCA’s push for a more agile oversight model, or the challenges of AI integration in the public sector, one thing is clear: the success of AI depends on the frameworks we build today.

As AI capabilities expand, so too must our approach to regulation, ethics, and education. The road ahead demands collaboration between policymakers, businesses, and technologists to create systems that not only foster innovation but also safeguard society.

We’ll be back in two weeks with more insights. Until then, let’s continue driving the conversation on responsible AI.

The Trusted AI Bulletin #5

Issue #5 – AI at the Edge: Governing the Future of Innovation

Introduction

Welcome to this week’s edition of The Trusted AI Bulletin, where we unpack the latest developments in AI governance, regulation, and adoption.

This week, we’re diving into OpenAI’s push for federal AI regulations, the launch of new compliance standards for bank-fintech partnerships, and the stark warnings from Turing Award winners about the unsafe deployment of AI models. As governments and businesses grapple with the dual demands of innovation and accountability, the conversation around responsible AI is reaching a critical inflection point.

The rapid evolution of AI is forcing a reckoning: how do we balance the need for speed and competitiveness with the imperative to build safeguards that protect society? From the financial sector’s embrace of AI-driven tools to IKEA’s leadership in ethical AI governance, the stories this week highlight both the opportunities and the risks of this transformative technology.

Key Highlights of the Week

1. OpenAI Appeals to White House for Unified AI Regulations Amidst State-Level Disparities

OpenAI has formally asked the White House to intervene against a patchwork of state-level AI regulations, advocating for a cohesive federal framework to govern artificial intelligence. This move underscores the company's concern that disparate state laws could stifle innovation and create compliance challenges.

Notably, OpenAI's Chief Global Affairs Officer, Chris Lehane, has highlighted the urgency of accelerating AI policy under the current administration, shifting from merely advocating regulation to actively promoting policies that bolster AI growth and maintain the U.S.'s competitive edge over nations like China.

In a 15-page set of policy suggestions released on Thursday, OpenAI argued that the hundreds of AI-related bills currently pending across the U.S. risk undercutting America's technological progress at a time when it faces renewed competition from China. The company proposed that the administration consider providing relief for AI companies from state rules in exchange for voluntary access to their models.

Source: Bloomberg link

2. CFES Unveils New Standards to Strengthen Compliance in Bank-Fintech Partnerships

The Coalition for Financial Ecosystem Standards (CFES) this week announced, in a press release, the launch of a new industry framework aimed at strengthening compliance and risk management in bank-fintech partnerships. The STARC framework, comprising 54 standards, sets a benchmark for key areas such as anti-money laundering (AML), third-party risk, and operational compliance, providing financial institutions with a structured rating system to assess their maturity.

To support adoption, CFES has also established an Advisory Board featuring key industry players like the Independent Community Bankers of America (ICBA) and the American Fintech Council (AFC). With regulators increasing scrutiny on fintech partnerships, these standards could play an important role in helping firms navigate compliance without stifling innovation.

As artificial intelligence continues to reshape financial services, frameworks like STARC offer a structured approach to ensuring transparency and accountability.

Source: Press release PDF link, CFES Standards link

3. Turing Award Winners Warn Over Unsafe Deployment of AI Models

AI pioneers Andrew Barto and Richard Sutton have strongly criticised the industry’s reckless approach to deploying AI models, warning that companies are prioritising speed and profit over responsible engineering. They argue that releasing untested AI systems to millions without safeguards is a dangerous practice, likening it to building a bridge and testing it by sending people across. Their work, which underpins major advancements in machine learning, has fuelled the rise of AI powerhouses such as OpenAI and Google DeepMind.

The pair, who have been awarded the 2024 Turing Award for their foundational contributions to artificial intelligence, have expressed serious concerns that AI development is being driven by business incentives rather than a focus on safety. Barto criticised the industry’s approach, stating, “Releasing software to millions of people without safeguards is not good engineering practice,” while Sutton dismissed the idea of artificial general intelligence (AGI) as mere “hype.” As AI investment reaches unprecedented levels, their warnings highlight the growing tensions between rapid technological advancement and the urgent need for stronger governance and regulatory oversight.

Source: FT link

Featured Articles

1. How Artificial Intelligence is Shaping the Future of Banking and Finance

The financial services sector is experiencing a significant transformation through the integration of artificial intelligence (AI), with investments projected to escalate from $35 billion in 2023 to $97 billion by 2027, reflecting a compound annual growth rate of 29%.
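The 29% figure can be sanity-checked with the standard compound annual growth rate formula, CAGR = (end value / start value)^(1 / years) - 1. A minimal Python sketch, assuming four annual compounding periods between 2023 and 2027 (the `cagr` function name is our own illustrative choice):

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` periods apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Projected AI investment in financial services, in billions of US dollars,
# using the figures quoted in the paragraph above.
rate = cagr(start_value=35, end_value=97, years=2027 - 2023)
print(f"Implied CAGR: {rate:.1%}")  # prints: Implied CAGR: 29.0%
```

The result agrees with the roughly 29% growth rate cited, confirming that the start and end figures and the stated rate are mutually consistent.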

Leading institutions such as Morgan Stanley and JPMorgan Chase have introduced AI-driven tools to enhance operational efficiency and client services. In the immediate term, AI co-pilots are streamlining workflows, while always-on AI web crawlers and automation of unstructured data tasks are providing real-time insights and reducing manual processes.

Looking ahead, AI's potential to revolutionise risk management and customer experience through the use of synthetic data is becoming increasingly evident. Fintech companies are at the forefront of this evolution, democratising AI capabilities and enabling smaller financial institutions to compete effectively. This rapid AI adoption underscores the urgency for robust AI governance and regulatory frameworks to ensure ethical implementation and maintain public trust.

Source: Forbes link

2. Mandatory AI Governance: Gartner Predicts Worldwide Regulatory Adoption by 2027

According to Gartner's research, by 2027, AI governance is expected to become a mandatory component of national regulations worldwide. This projection underscores the escalating concerns surrounding data security and the imperative for robust governance frameworks in the rapidly evolving AI landscape.

Notably, Gartner anticipates that over 40% of AI-related data breaches could stem from cross-border misuse of generative AI, highlighting the critical need for cohesive ethical governance. The absence of such frameworks may result in organisations failing to realise the anticipated value of their AI initiatives.

This development signals a pivotal shift towards more stringent AI oversight, emphasising the necessity for organisations to proactively adopt comprehensive governance strategies to mitigate risks and ensure compliance with forthcoming regulatory standards.

Source: CDO Magazine link

3. Balancing Control and Collaboration: Five Essential Layers of AI Sovereignty

The concept of AI sovereignty extends far beyond data localisation or regulatory compliance, requiring a multi-layered approach to ensure true independence.

Five key layers define AI sovereignty: legal and regulatory control, resource and technical independence, operational autonomy, cognitive sovereignty over AI models and algorithms, and cultural influence in shaping public perception and ethical norms. Each layer plays a crucial role in balancing national or organisational control with global collaboration, ensuring AI aligns with strategic interests while maintaining adaptability.

Without a structured approach to sovereignty, reliance on external AI infrastructure and governance could pose significant risks to security, competitiveness, and ethical oversight. As AI regulations evolve, this framework highlights the need for a proactive, layered strategy to navigate the complexities of AI governance effectively.

Source: Anthony Butler link

Industry Insights

Case Study: IKEA’s responsible AI governance

As AI becomes increasingly embedded in business operations, IKEA has taken a proactive and structured approach to AI governance, ensuring ethical and responsible deployment. Recognising the potential risks of AI alongside its benefits, IKEA introduced its first digital ethics policy in 2019, laying the foundation for responsible AI development.

By 2021, the company had established a dedicated AI governance framework, with a multidisciplinary team overseeing compliance, risk management, and ethical considerations. This governance model ensures that AI is used transparently, fairly, and in alignment with business goals.

Key areas of focus include enhancing employee productivity, optimising supply chains, and improving customer experiences—all while maintaining strict ethical standards. Additionally, IKEA’s AI literacy programme is designed to empower employees with the skills needed to navigate AI responsibly, reinforcing the company’s commitment to human-centric innovation.

Key Takeaways:

1. AI Governance as a Business Imperative: Rather than treating AI governance as a regulatory checkbox, IKEA integrates responsible AI principles into its core business strategy. This ensures that AI-driven innovations align with ethical considerations and organisational priorities.

2. Proactive Regulatory Compliance: IKEA’s commitment to responsible AI extends to early compliance with the EU AI Act. As a signatory of the AI Pact, the company is ahead of regulatory requirements, demonstrating leadership in ethical AI governance.

3. Empowering Employees Through AI Education: Understanding that responsible AI usage starts with people, IKEA has launched an AI literacy programme to train 30,000 employees in 2024. This initiative fosters a culture of accountability and awareness, reducing risks associated with AI adoption.

By prioritising governance, education, and ethical AI integration, IKEA is setting a benchmark for responsible AI adoption in the retail sector, ensuring that technological advancements serve both business needs and societal good.

Sources: CIO Dive link, Global Loyalty Organisation link

Upcoming Events

1. In-Person Event: AI for CFOs - Minimise Risk to Maximise Returns - 25 March 2025

On 25 March 2025, The Economist is hosting the AI for CFOs event in London, focusing on how finance leaders can leverage artificial intelligence to enhance corporate performance. Attendees will explore AI's role in delivering real-time insights, improving forecasting accuracy, automating compliance, and strengthening data security. This event offers a valuable opportunity to connect with industry experts and discover actionable strategies for integrating AI into financial operations.

Register now: The Economist link

2. Webinar: Strategies and Solutions for Unlocking Value from Unstructured Data - 27 March 2025

A-Team Insight’s upcoming webinar, Strategies and Solutions for Unlocking Value from Unstructured Data, will explore how firms can harness the vast potential of unstructured data—emails, customer feedback, and other text-based information—to drive smarter decision-making and gain a competitive edge. Industry experts will share practical approaches to extracting insights, improving operational efficiency, and uncovering new business opportunities. If you're looking to turn your organisation’s unstructured data into a valuable asset, this session is not to be missed.

Register now: A-Team Insight link

3. Webinar: Five Essential Tips for Successful AI Adoption - 15 April 2025

This webinar focuses on the critical role of data quality in AI success. As businesses rush to integrate AI, experts will discuss why clean, structured, and well-governed data must be a top priority to avoid AI becoming a liability. The session will cover key topics such as data governance, security, privacy, ethical considerations, and how to maximise AI ROI. Attendees will gain executive-level strategies to ensure AI delivers meaningful business impact.

Register now: CIO Dive link

4. In-Person Event: AI Breakfast & Roundtable – From AI Proof of Concept to Scalable Enterprise Adoption – 23 April 2025

Leading Point is hosting an exclusive AI Breakfast & Roundtable, bringing together AI leaders from top financial institutions, including banks, insurance firms, and buy-side institutions. This intimate, high-level discussion will explore the challenges and opportunities in scaling AI beyond proof of concept to enterprise-wide adoption.

Key discussion points include overcoming implementation barriers, aligning AI initiatives with business objectives, and best practices for AI success in banking, insurance, and investment management. This event offers a unique opportunity to connect with industry peers and gain strategic insights on embedding AI as a core driver of business value.

Want to be a part of the conversation?

If you are an executive with AI responsibilities in business, risk & compliance, or data, contact Rajen Madan or Thushan Kumaraswamy to get a seat at the table.

Conclusion

The stories this week underscore a critical truth: AI governance isn’t just about compliance—it’s about building trust. From OpenAI’s push for federal oversight to IKEA’s ethical framework, the focus is shifting from rapid adoption to responsible deployment. The warnings from Turing Award winners Barto and Sutton are a stark reminder: innovation without safeguards is a risk we can’t afford.

As AI’s influence grows, the challenge is clear—businesses and policymakers must act now to bridge governance gaps, prioritise transparency, and ensure AI serves society as much as it drives progress. The future of AI depends on the choices we make today.

We’ll be back in two weeks with more insights. Until then, let’s keep pushing for a future where AI works for everyone.

The Trusted AI Bulletin #4

Issue #4 – Regulating AI: Balancing Innovation, Risk, and Global Influence

Introduction

Welcome to this edition of The Trusted AI Bulletin, where we explore the latest shifts in AI governance, regulation, and adoption. This week, we examine the UK’s evolving AI policy, the growing tensions between Big Tech and European regulators, and the strategic choices shaping AI’s future. With governments reassessing their regulatory approaches and businesses navigating complex compliance landscapes, the conversation around responsible AI is more urgent than ever.

AI adoption requires firms to focus on key capabilities, baseline their AI maturity, and articulate AI risks more effectively. Discussions with executives highlight a gap between the C-suite and AI leads, making governance alignment a critical success factor.

Whether you’re a policymaker, business leader, or AI enthusiast, our curated insights will help you stay informed on the key trends shaping the future of AI.

Key Highlights of the Week

1. UK Postpones AI Regulation to Align with US Policies

The UK government has postponed its anticipated AI regulation bill, which was originally slated for release before Christmas and is now expected in the summer. The delay aims to align the UK's AI policies with the deregulatory stance of President Trump's administration, which has recently dismantled previous AI safety measures. Ministers are concerned that premature regulation could deter AI businesses from investing in the UK, especially as the US adopts a more laissez-faire approach.

This strategic shift underscores the UK's intent to remain competitive in the global AI landscape, particularly against the backdrop of the EU's stricter regulatory proposals. However, this move has sparked debate over the balance between fostering innovation and ensuring ethical AI development.

Our take: This is a critical moment for businesses to take a proactive approach to AI governance rather than waiting for regulatory clarity. Firms must self-regulate by adopting strong AI controls and risk frameworks to ensure ethical and responsible AI deployment.

Source: The Guardian link

2. Big Tech vs Brussels: Silicon Valley Ramps Up Fight Against EU AI Rules

Silicon Valley’s biggest players, led by Meta, are intensifying their efforts to weaken the EU’s stringent AI and digital market regulations—this time with backing from the Trump administration. Lobbyists see an opportunity to pressure Brussels into softening enforcement of the AI Act and Digital Markets Act, with Meta outright refusing to sign up to the EU’s upcoming AI code of practice. The European Commission insists it will uphold its rules, but its recent decision to drop the AI Liability Directive suggests some willingness to compromise. If European regulators waver, it could set a dangerous precedent, emboldening Big Tech to dictate the terms of global AI governance.

Source: FT link

3. AI Safety Institute Rebrands, Drops Bias Research

The UK government has rebranded its AI Safety Institute, now called the AI Security Institute, shifting its focus away from AI bias and free speech concerns. Instead, the institute will prioritise cybersecurity threats, fraud prevention, and other high-risk AI applications. This move aligns the UK's AI policy more closely with the U.S. and has sparked debate over whether deprioritising bias research could have unintended societal consequences.

Our take: Bias and fairness remain core AI governance challenges. Firms need to go beyond regulatory mandates and build internal frameworks that address bias and transparency, ensuring trust in AI applications.

Should AI regulation focus solely on security threats, or is ignoring bias a step backward in responsible AI governance?

Source: UK Gov link

Featured Articles

1. UK AI Regulation Must Balance Innovation and Responsibility

The UK government’s approach to AI regulation will play a crucial role in shaping economic growth. The challenge lies in ensuring AI is safe, fair, and reliable without imposing rigid constraints that could stifle innovation. A risk-based, principles-driven framework—similar to the EU’s AI Act—offers a way forward, allowing adaptability while maintaining accountability. The real test will be whether regulation fosters trust and responsible AI use or becomes an obstacle to progress. Governance should encourage businesses to integrate ethical AI practices, not just comply with rules.

Our take: Striking this balance will be key to ensuring AI drives long-term economic and technological advancement. Firms shouldn’t wait for regulatory clarity. Assessing AI risks, implementing governance frameworks, and ensuring transparency now will give organisations a competitive edge.

Source: The Times link

2. Addressing Data and Expertise Gaps in AI Integration

In the rapidly evolving AI landscape, organisations face significant hurdles to adoption. Nearly half of respondents cite concerns about data accuracy or bias, while 42% of enterprises report having insufficient proprietary data to customise AI models effectively, underscoring the need for robust data strategies. A similar share points to a lack of generative AI expertise, a critical skills gap that must be addressed.

Moreover, financial justification remains a challenge, as organisations struggle to quantify the return on investment for AI initiatives. These challenges are particularly pertinent in the context of AI governance and regulation, emphasising the necessity for comprehensive frameworks to ensure ethical and effective AI deployment.

Source: IBM link

3. Global AI Compliance Made Easy – A Must-Have Tracker for AI Governance

The Global AI Regulation Tracker, developed by Raymond Sun, is a powerful, interactive tool that keeps you ahead of the curve on AI laws, policies, and regulatory updates worldwide. With a dynamic world map, in-depth country profiles, and a live AI newsfeed, it provides a one-stop resource for navigating the complex and evolving AI governance landscape. Updated regularly, it ensures you never miss a critical regulatory shift that could impact your business or compliance strategy. Stay informed, stay compliant, and turn AI regulation into a competitive advantage.


Source: Techie Ray link

4. Breaking Down Barriers: Strategies for Successful AI Adoption

Artificial intelligence holds immense promise for revolutionising business operations, yet a staggering 80% of AI initiatives fall short of expectations. This high failure rate often stems from challenges such as subpar data quality, organisational resistance, and a lack of robust leadership support.

To navigate these obstacles, companies must prioritise comprehensive data management, foster a culture open to change, and ensure active engagement from leadership. Moreover, aligning AI projects with clear business objectives and investing in employee training are pivotal steps towards realising AI's full potential. Without addressing these critical areas, organisations risk squandering resources and missing out on the transformative benefits AI offers.

Source: Forbes link

Industry Insights

Case Study: AXA's Ethical AI Integration: Boosting Efficiency and Trust in Insurance

AXA, a global insurance leader, has strategically integrated Artificial Intelligence (AI) into its operations to enhance efficiency and uphold ethical standards. By implementing a dedicated AI governance team comprising actuaries, data scientists, privacy specialists, and business experts, AXA ensures responsible AI adoption across its services. This team focuses on creating transparent AI models, safeguarding data privacy, and maintaining human oversight in AI-driven decisions.

A practical application of this strategy is evident in AXA UK's deployment of 13 software bots within their claims departments, which, over six months, saved approximately 18,000 personnel hours and yielded around £140,000 in productivity gains. This initiative not only streamlines repetitive tasks but also reinforces AXA's commitment to ethical AI practices, setting a benchmark for the insurance industry.

Key Outcomes of AI Governance at AXA:

* Operational Efficiency: The introduction of AI bots has significantly reduced manual processing time, enhancing overall productivity.

* Ethical AI Deployment: Establishing a robust governance framework ensures AI applications are transparent, fair, and aligned with societal responsibilities.

* Enhanced Customer Service: Automation of routine tasks allows employees to focus on more complex customer needs, improving service quality.

Sources: Capgemini link, AXA link

Upcoming Events

1. Webinar: Augmenting Private Equity Expertise With AI – 6 March 2025

This event aims to explore practical strategies for private equity firms to integrate artificial intelligence, enhancing expertise and uncovering new value sources. Discussions will focus on AI's role in competitive deal sourcing, transforming due diligence processes, and bolstering risk management. As AI continues to reshape the financial landscape, this webinar offers timely insights into aligning technology strategies with business objectives, ensuring AI-driven value creation throughout the investment lifecycle.

Register now: FT Live link

2. Webinar: CIOs, Set the Right AI Strategy in 2025 – 7 March 2025

In this upcoming webinar, Chief Information Officers will gain insights into formulating effective AI strategies that yield measurable outcomes. The session aims to equip CIOs with the tools to navigate the complexities of AI implementation, ensuring alignment with organisational goals and compliance with emerging AI regulations. As AI continues to reshape industries, understanding its governance and regulatory landscape becomes imperative for IT leaders.

Register now: Gartner link

3. In-Person Event: AI UK 2025 Alan Turing Institute – 17 – 18 March 2025

This in-person event brings together experts to explore the latest advancements in artificial intelligence, governance, and regulation. A key highlight of the event is the panel discussion, Advancing AI Governance Through Standards, taking place on 18 March 2025.

Led by The AI Standard Hub, the session will delve into recent developments in AI assurance, global standardisation efforts, and strategies for fostering inclusivity in AI governance. As AI regulations continue to evolve, this discussion offers valuable insights into building a robust AI assurance ecosystem and ensuring responsible AI deployment.

Register now: Turing Institute link

Conclusion

As AI governance takes centre stage, the challenge remains: how do we drive innovation while ensuring transparency, fairness, and accountability? This issue underscores the importance of strategic regulation, ethical AI adoption, and proactive leadership in shaping a future where AI works for businesses and society alike. AI governance is shifting, but businesses can’t afford to wait. Firms need to invest more effort in understanding AI risks, baseline their AI capabilities, and bridge the governance gaps between leadership and AI teams.

With AI’s influence growing across industries, the need for informed decision-making has never been greater. Whether it’s policymakers refining regulations or organisations refining their AI strategies, the key takeaway is clear: responsible AI isn’t just about compliance—it’s about long-term success.

We’ll be back in two weeks with more insights—until then, let’s continue shaping a responsible AI future together.

The Trusted AI Bulletin #3

Issue #3 – Global AI Crossroads: Ethics, Regulation, and Innovation

Introduction

Welcome to this week’s edition of The Trusted AI Bulletin, where we explore the latest developments, challenges, and opportunities in the rapidly evolving world of AI governance. From global ethical debates to regulatory updates and industry innovations, this week’s highlights underscore the critical importance of balancing innovation with responsibility.

As AI continues to transform industries and societies, the need for robust governance frameworks has never been more urgent. For many organisations, this means not just keeping pace with regulatory change but also taking practical steps—such as bringing key teams together to assess AI usage, ensuring leadership is informed on emerging risks, and building governance frameworks that can evolve alongside innovation.

Join us as we delve into key stories shaping the future of AI governance and examine how organisations and nations are navigating this complex landscape.

Key Highlights of the Week

1. UK and US Withhold Support for Global AI Ethics Pact

At the AI Action Summit in Paris, the UK and US refused to sign a joint declaration on ethical and transparent AI, which was backed by 61 countries, including China and EU nations. The UK cited concerns over a lack of "practical clarity" and insufficient focus on security, while the US objected to language around "inclusive and sustainable" AI. Both governments stressed the need for further discussions on AI governance that align with their national interests. Critics and AI experts warn that this decision is a missed opportunity for democratic nations to take the lead in shaping AI governance, potentially allowing other global powers to set the agenda.

Source: The Times link

2. New PRA Letter Outlines 2025 Expectations for UK Banks

The Prudential Regulation Authority (PRA) has issued a letter outlining its 2025 supervisory priorities for UK banks, focusing on risk management, governance, and resilience. With ongoing market volatility, AI adoption, and geopolitical uncertainty, firms are expected to strengthen their risk frameworks and controls.

Liquidity and funding will also be under scrutiny, as the Bank of England shifts to a new reserve management approach. Meanwhile, banks must demonstrate by March 2025 that they can maintain operations during severe disruptions.

Notably, the Basel 3.1 timeline has been pushed to 2027, giving firms more time to adjust. However, regulatory focus on AI, cyber risks, and data management is set to increase, with further updates expected later this year.

Source: PRA PDF link

3. G42 and Microsoft Launch the Middle East’s First Responsible AI Initiative

G42 and Microsoft have jointly established the Responsible AI Foundation, the first of its kind in the Middle East, aiming to promote ethical AI standards across the Middle East and Global South. Supported by the Mohamed bin Zayed University of Artificial Intelligence (MBZUAI), the foundation will focus on advancing responsible AI research and developing governance frameworks that consider cultural diversity. Inception, a G42 company, will lead the programme, while Microsoft plans to expand its AI for Good Lab to Abu Dhabi. This initiative underscores a commitment to ensuring AI technologies are safe, fair, and aligned with societal values.

Source: G42.ai link

Featured Articles

1. AI is Advancing Fast—Why Isn’t Governance Keeping Up?

Artificial intelligence is evolving at breakneck speed, reshaping industries and daily life, yet a clear governance framework is still missing. Effective policies must be based on scientific reality, not speculation, to address real-world challenges without stifling progress. Striking the right balance between innovation and regulation is crucial, especially as AI’s impact grows. Open access to AI models is key to driving research and ensuring future breakthroughs aren’t limited to a select few. With AI set to transform everything from healthcare to energy, the question remains—can governance keep pace?

Source: FT link

2. The EU AI Act: What High-Risk AI Systems Must Get Right

The EU AI Act imposes stringent obligations on high-risk AI systems, requiring organisations to implement risk management frameworks, ensure data governance, and maintain transparency. CIOs and CDOs must oversee compliance, ensuring human oversight, proper documentation, and clear communication when AI is in use.

A key focus is ensuring AI systems are explainable and auditable, enabling regulators and stakeholders to understand how decisions are made. Non-compliance carries significant financial and operational risks, making early alignment with regulatory requirements essential.

With enforcement approaching, businesses must integrate these rules into their AI strategies to maintain trust, mitigate risks, and drive responsible innovation. To stay ahead, organisations should conduct internal audits, update governance policies, and invest in staff training to embed compliance across AI initiatives. Proactive action now will determine competitive advantage in an AI-regulated future.

Source: A&O Shearman link

3. Building a Data-Driven Culture: Four Essential Pillars for Success

While many organisations collect vast amounts of data, few truly unlock its transformative potential. Success lies in mastering four critical elements: leadership commitment to champion data use, fostering data literacy across teams, ensuring data is accessible and integrated, and establishing trust through robust governance. Without these pillars, even the most data-rich organisations risk inefficiency and missed opportunities. A strong data-driven culture isn’t just about tools—it’s about embedding these principles into the fabric of your organisation.

Source: MIT Sloan link

Industry Insights

Case Study: Ocado’s Approach to Responsible AI Governance

Ocado Group has embedded AI across its operations, from optimising warehouse logistics to enhancing customer experiences. However, as AI adoption scales, so do the risks—unintended biases, unpredictable decision-making, and regulatory challenges. To navigate this, Ocado has placed responsible AI governance at the heart of its strategy, ensuring its models remain transparent, fair, and reliable.

A key component of its AI governance strategy is its Responsible AI Framework, built around five key principles: Fairness, Transparency, Governance, Robustness, and Impact. This structured approach ensures AI systems are rigorously tested to prevent bias, remain explainable, and function as intended across complex operations.

One tangible success of this framework is Ocado’s real-time AI-powered monitoring, which has led to £100,000 in annual cost savings by automatically detecting and resolving system anomalies. With AI observability tools tracking over 100 microservice applications within its Ocado Smart Platform (OSP), the company can proactively address inefficiencies, minimising downtime and enhancing system reliability.

AI governance ensures Ocado’s AI models remain resilient and accountable, reducing risks associated with unpredictable AI behaviour. By embedding responsible AI principles into its operations, Ocado continues to optimise efficiency, prevent costly errors, and align with evolving regulatory expectations around AI.

Sources: Ocado Group link, Ocado Group link (CDO interview)

Upcoming Events

1. In-Person Event: Microsoft AI Tour - 5 March 2025

The Microsoft AI Tour in London is an event for professionals looking to explore the transformative potential of artificial intelligence. Featuring expert-led sessions, interactive workshops, and live demonstrations, it offers a unique opportunity to dive into the latest AI innovations and their real-world applications. Whether you're looking to expand your knowledge, network with industry leaders, or discover how AI can drive impact, this event is an invaluable experience for anyone invested in the future of technology.

Register now: MS link

2. In-Person Event: IRM UK Data Governance Conference - 17-20 March 2025

The Data Governance, AI Governance & Master Data Management Conference Europe is scheduled for 17–20 March 2025 in London. This four-day event offers five focused tracks, covering topics such as data quality, MDM strategies, and AI ethics. The conference features practical case studies from leading organisations, providing attendees with actionable insights into effective data management practices.

Key sessions include “Navigating the Intersection of Data Governance and AI Governance” and “How Master Data Management can Enable AI Adoption”. Participants will also have opportunities to connect with over 250 data professionals during dedicated networking sessions.

Register now: IRM UK link

3. Webinar: Strategies and Solutions for Unlocking Value from Unstructured Data - 27 March 2025

Discover how to harness the untapped potential of unstructured data in this insightful webinar. The session will explore practical strategies and innovative solutions to extract actionable insights from data sources like emails, documents, and multimedia. Attendees will gain valuable knowledge on overcoming challenges in data management, leveraging advanced technologies, and driving business value from previously underutilised information.

Register now: A-Team Insight link

Conclusion

As we wrap up this edition of The Trusted AI Bulletin, it’s clear that the journey toward ethical and effective AI governance is both challenging and essential. From the UK and US withholding support for a global AI ethics pact to Ocado’s pioneering approach to responsible AI, the stories this week highlight the diverse perspectives and strategies shaping the future of AI.

While progress is being made, the road ahead demands collaboration, innovation, and a shared commitment to ensuring AI benefits all of humanity. For organisations looking to act now, investing in education, cross-functional AI collaboration, and a clear governance roadmap will be key to staying competitive in an AI-regulated future.

Stay tuned for more updates, and let’s continue working together to build a future where AI is not only powerful but also fair, transparent, and accountable.

The Trusted AI Bulletin #2

Issue #2 – AI Investment, Ethics & Compliance Trends

Introduction

Welcome to this edition of The Trusted AI Bulletin, where we bring you the latest developments in enterprise AI risk management, adoption, and ethical AI practices.

This week, we examine how tech giants are investing billions into AI innovation, the growing global alignment on AI safety, and why passive data management is no longer viable in an AI-driven world.

With AI becoming an integral part of finance, healthcare, and other critical industries, strong governance frameworks are essential to ensure trust, transparency, and long-term success.

From real-world case studies like DBS Bank’s AI journey to upcoming industry events, this issue gives you insights to help you stay ahead in the evolving AI landscape.

Key Highlights of the Week

1. Stargate: America's $500 Billion AI Power Play

The United States unveiled the Stargate Project, a $500 billion initiative over the next four years to establish the world's most extensive AI infrastructure and secure global dominance in the field. Led by OpenAI, Oracle, and SoftBank, with backing from President Trump, the project plans to build 20 massive data centres across the U.S., starting with a 1 million-square-foot facility in Texas. Beyond advancing AI capabilities, Stargate is also a strategic move to attract global investment capital, potentially limiting China’s access to AI funding. With its $500 billion commitment far surpassing China’s $186 billion AI infrastructure spending to date, the U.S. is making a bold play to corner the market and maintain its technological edge.

Source: Forbes link

2. China’s AI Firms Align with Global Safety Commitments, Signalling Convergence in Governance

Chinese AI companies, including DeepSeek, are rapidly advancing in the global AI race, with their latest models rivalling top Western counterparts. In a notable shift, 17 Chinese firms have signed AI safety commitments similar to those adopted by Western companies, signalling a growing alignment on governance principles. This convergence highlights the potential for international collaboration on AI safety, despite ongoing geopolitical competition. As AI development accelerates, upcoming forums like the Paris AI Action Summit may play a crucial role in shaping global AI governance.

Source: Carnegie Endowment research link

3. Lloyds Banking Group Expands AI Leadership with New Head of Responsible AI

Lloyds Banking Group has appointed Magdalena Lis as its new Head of Responsible AI, reinforcing its commitment to ethical AI development. With over 15 years of experience, including advisory roles for the UK Government and leadership at Toyota Connected Europe, Lis will focus on ensuring AI safeguards while advancing innovation. This move follows the appointment of Dr. Rohit Dhawan as Director of AI and Advanced Analytics in 2024, as Lloyds continues to grow its AI Centre of Excellence, now comprising over 200 specialists. As AI reshapes banking, Lloyds aims to balance technological advancement with responsible implementation.

Source: FF News link

Featured Articles

1. Why Passive Data Management No Longer Works in the AI Era

The days of passive data management are over—AI-driven organisations need a proactive approach to governance. Chief Data Officers (CDOs) must ensure that data is high-quality, well-structured, and compliant to fully unlock AI’s potential. This means implementing automation, real-time monitoring, and stronger governance frameworks to mitigate risks while enhancing decision-making. Without these measures, businesses risk falling behind in an increasingly AI-powered world. The article explores how CDOs can take control of their data strategy to drive innovation and maintain regulatory compliance.

Image source: Image generated using ImageFX

Source: Medium link

2. How Governance & Privacy Can Safeguard AI Development

As AI adoption accelerates, so do concerns over data exposure, compliance failures, and reputational damage. Informatica warns that without strong governance and privacy policies, organisations risk losing control over sensitive information. Proactive data management, human oversight, and clear accountability are crucial to ensuring AI is both powerful and responsible. Businesses must not only understand the data fuelling their AI models but also implement safeguards to prevent unintended consequences. In an AI-driven world, those who neglect governance may find themselves facing serious risks.

Source: A-Team Insight link

3. AI Literacy: The Key to Staying Ahead in an AI-Driven World

AI is transforming industries, but do your teams truly understand how to use it responsibly? Without proper AI literacy, businesses risk compliance failures, biased decision-making, and missed opportunities. A well-designed AI training programme helps employees navigate regulations, mitigate risks, and unlock AI’s full potential. From assessing knowledge gaps to tailoring content for different roles, the right approach ensures AI is used strategically and ethically. As AI continues to evolve, organisations that prioritise education will be better equipped to adapt and thrive.

Source: IAPP link

Industry Insights

Case Study: DBS Bank - AI Success Rooted in Robust Governance Framework

Harvard Business School’s recent case study on DBS Bank highlights the critical role of AI governance in executing a successful AI strategy. Headquartered in Singapore, DBS embarked on a multi-year digital transformation under CEO Piyush Gupta in 2014, incorporating AI to enhance business value and customer experience. As AI adoption scaled, DBS developed its P-U-R-E framework—emphasising Purposeful, Unsurprising, Respectful, and Explainable AI—to ensure ethical and responsible AI deployment. This governance-first approach has been instrumental in managing risks while maximising AI’s potential across banking operations.

In 2022, DBS began exploring Generative AI (GenAI) use cases, adapting its governance frameworks to balance innovation with emerging risks. By leveraging its existing AI capabilities, the bank continues to integrate AI responsibly while maintaining regulatory compliance and trust.

Key Outcomes of AI Governance at DBS:

o Economic Impact: DBS anticipates its AI initiatives will generate over £595 million in economic benefits by 2025, following consecutive years of doubling impact.

o Enhanced Customer Experience: AI-driven hyper-personalised prompts assist customers in making better investment and financial planning decisions.

o Employee Development: AI supports employees with tailored career and upskilling roadmaps, fostering long-term career growth.

Sources: DBS Bank news link

Upcoming Events

1. Webinar: Transforming Banking with GenAI – 13 February 2025

Join the Financial Times for a webinar exploring the transformative potential of Generative AI (GenAI) in the banking sector. Industry leaders will discuss the latest GenAI applications, including synthetic data and self-supervised learning, and provide strategies for navigating the rapidly evolving AI landscape. Key topics include revolutionising core banking operations, building robust data strategies, and reskilling workforces for future challenges.

Register now: FT link

2. Webinar: AI Maturity & Roadmap: Accelerate Your Journey to AI Excellence – 27 February 2025

Gartner is hosting a webinar focusing on assessing AI maturity and exploring the transformative potential of AI within organisations. The session will utilise Gartner's AI maturity assessment and roadmap tools to outline key practices across seven workstreams essential for achieving AI success at scale. Attendees will gain insights into managing and prioritising activities to harness AI's full potential.

Register now: Gartner link

3. Webinar: What Do CIOs Really Care About? – 13 March 2025

Join IDC for an insightful webinar exploring the evolving priorities of Chief Information Officers in the digital era. The session will delve into how CIOs are balancing innovation with pragmatism, transitioning from traditional IT management to strategic leadership roles that drive business transformation. Attendees will gain perspectives on aligning technology initiatives with organisational goals and the critical role of CIOs in today's rapidly changing technological landscape.

Register now: IDC link

Conclusion

Implementing Trusted AI isn’t just a regulatory requirement—it’s a business imperative. As organisations integrate AI into critical decision-making, ensuring trust, transparency, and compliance will define long-term success. By staying informed on evolving policies, adopting strong governance frameworks, and fostering ethical AI practices, businesses can harness AI’s full potential while managing risks.

We’d love to hear your thoughts! Join the conversation, share your perspectives, and stay engaged with us as we navigate the future of responsible AI together.

See you in the next issue!

Rajen Madan

Thushan Kumaraswamy

The Trusted AI Bulletin #1

Issue #1 – AI Advancements and Regulatory Shifts

Introduction

Welcome to the inaugural edition of The Trusted AI Bulletin! As artificial intelligence continues to reshape industries, the importance of robust risk management, deployment processes, transparency and ethical oversight of AI cannot be overstated.

At Leading Point, our mission is to help those responsible for implementing AI in enterprises deliver trusted, rapid AI innovations while removing the blockers – be it uncertainty around AI value, lack of trust in AI outputs or weak user adoption.

This newsletter is your bi-weekly guide to staying informed, inspired, and ahead of the curve as you navigate the challenges of AI deployment and realise the opportunity of AI in your enterprise.

Key Highlights of the Week

1. AI Innovations in Financial Services

The UK’s AI sector continues to grow, attracting £200 million a day in private investment since July 2024, with notable contributions such as CoreWeave’s £1.75 billion data centre investment. This momentum underscores the transformative potential of AI in sectors such as financial services. From cutting-edge AI models to emerging data infrastructure, leaders navigating this rapidly evolving space need to stay ahead of these innovations.

Source: UK Government link

2. UK AI Action Plan

The UK government has officially approved a sweeping AI action plan aimed at establishing a robust economic and regulatory framework for artificial intelligence. The plan focuses on ensuring AI is developed safely and responsibly, with a strong emphasis on promoting innovation while addressing potential risks. Key priorities include creating clear guidelines for AI governance, fostering collaboration between government and industry, and ensuring the UK remains a global leader in AI development. This action plan marks a significant step towards creating a balanced approach to AI regulation.

Source: Artificial Intelligence News link

3. Tech Nation to launch London AI Hub

Brent Hoberman’s Founders Forum announced the London AI Hub in collaboration with European AI group Merantix, Onfido and Quench.ai founder Husayn Kassai, and flexible office provider Techspace. The initiative aims to bring together a fragmented sector. Hoberman said the hub would act as a “physical nucleus for meaningful collaboration across founders, investors, academics, policymakers and innovators.”

Source: UK Tech News link

Featured Articles

1. 10 AI Strategy Questions Every CIO Must Answer

Artificial intelligence is transforming industries, and CIOs play a key role in aligning AI initiatives with business objectives. The article outlines 10 critical questions that every CIO must answer to ensure a successful AI strategy, from building governance frameworks to implementing ethical AI.

Source: CIO.com link

2. AI Regulations, Governance, and Ethics for 2025

The global landscape for AI regulation is evolving rapidly, with regions adopting diverse approaches to governance and ethics. In the UK, a traditionally light-touch, pro-innovation approach is now shifting towards proportionate legislation focused on managing risks from advanced AI models. With upcoming proposals and the UK AI Safety Institute’s pivotal role in global risk research, the country aims to balance innovation with safety.

Source: Dentons link

Industry Insights

Case Study: Mastercard

Mastercard’s commitment to ethical AI governance is a core part of its innovation strategy. Recognising the potential risks of AI, Mastercard developed a comprehensive framework to ensure its AI systems align with corporate values, societal expectations, and regulatory standards. This approach highlights the growing importance of AI governance in fostering trust, minimising risks, and enabling responsible innovation.

Key elements of Mastercard’s AI governance strategy include:

o Transparency and accountability: Regular audits and cross-functional oversight ensure AI systems operate fairly and responsibly.

o Ethical principles in practice: AI systems are designed to uphold fairness, privacy, and security, balancing innovation with societal and corporate responsibilities.

This case underscores how robust AI governance can help organisations navigate the complexities of AI deployment while maintaining trust and ethical integrity.

Source: IMD link

Upcoming Events

1. Webinar: A CISO Guide to AI and Privacy – 21 January 2025

Explore how to develop effective AI policies aligned with industry best practices and emerging regulations in this insightful webinar. Maryam Meseha and Janelle Hsia will discuss ethical AI use, stakeholder collaboration, and balancing business risks with opportunities. Learn how AI can enhance cybersecurity and drive innovation while maintaining compliance and trust.

Register now: Brighttalk link

2. The Data Advantage – Smarter Investments in Private Markets – 28 January 2025

This event, run by Leading Point, focuses on the transformative role of data and technology in private markets, bringing together investors, data professionals, and market leaders to explore smarter investment strategies. Key discussions will cover leveraging data-driven insights, integrating advanced analytics, and enhancing decision-making processes to maximise returns in private markets.

Register now: Eventbrite link

3. The Data Management Summit 2025 – 20 March 2025

The Data Management Summit London is a premier event bringing together data leaders, regulators, and technology innovators to discuss the latest trends and challenges in data management, particularly in financial services. Key topics include data governance, ESG data, cloud strategies, and leveraging AI and advanced analytics to drive innovation while maintaining regulatory compliance. It’s an excellent opportunity to network and learn from industry leaders.

Register now: A-Team Insight link

Conclusion